YAML

YAML is a human-friendly data serialization standard, especially suited to configuration files. It's simple to read and use.

Here is an example:

---
# A list of tasty fruits
fruits:
- Apple
- Orange
- Strawberry
- Mango

btw, the latest version of YAML is v1.2.

PyYAML

Working with YAML files in Python is really easy. The Python module PyYAML must be installed on the system.

On an Arch Linux box, this Python package can be installed system-wide by typing:

$ sudo pacman -S --noconfirm python-yaml

Python3 - Yaml Example

Save the above YAML example to a file, e.g. fruits.yml

Open the Python3 Interpreter and write:

$ python3.6
Python 3.6.4 (default, Jan 5 2018, 02:35:40)
[GCC 7.2.1 20171224] on linux
Type "help", "copyright", "credits" or "license" for more information.
>>> from yaml import load
>>> print(load(open("fruits.yml")))
{'fruits': ['Apple', 'Orange', 'Strawberry', 'Mango']}
>>>

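An alternative way is to write the above commands to a Python file (test.py). PyYAML 6.0 and later no longer accept load() without an explicit Loader argument, so this version uses safe_load:

from yaml import safe_load

with open("fruits.yml") as f:
    print(safe_load(f))

and run it from the console: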

$ python3 test.py
{'fruits': ['Apple', 'Orange', 'Strawberry', 'Mango']}

Instead of print, we can use yaml.dump, e.g.:

import yaml

yaml.dump(yaml.safe_load(open("fruits.yml")), default_flow_style=None)

'fruits: [Apple, Orange, Strawberry, Mango]\n'

Because the top-level node of fruits.yml is a mapping, the value returned by the loader is a Python dictionary:

type(yaml.safe_load(open("fruits.yml")))

<class 'dict'>

Keep that in mind.

Jinja2

Jinja2 is a modern and designer-friendly templating language for Python.

As a template engine, we can use Jinja2 to build complex markup (or even plain text) output quickly and efficiently.

Here is a Jinja2 template example:

I like these tasty fruits:

* {{ fruit }}

where {{ fruit }} is a variable.

Once the fruit variable is given a value, the Jinja2 template generates the desired output.

python-jinja

On an Arch Linux box, this Python package can be installed system-wide by typing:

$ sudo pacman -S --noconfirm python-jinja

Python3 - Jinja2 Example

Below is a python3 - jinja2 example:

import jinja2

template = jinja2.Template("""
I like these tasty fruits:

* {{ fruit }}
""")

data = "Apple"
print(template.render(fruit=data))

The output of this example is:

I like these tasty fruits:

* Apple

File Template

Reading the Jinja2 template from a template file is a little more complicated than before. Building the Jinja2 environment is step one:

env = jinja2.Environment(loader=jinja2.FileSystemLoader("./"))

and Jinja2 is ready to read the template file:

template = env.get_template("t.j2")

The template file t.j2 is a little different than before:

I like these tasty fruits:
{% for fruit in fruits -%}
* {{ fruit }}
{% endfor %}

Yaml, Jinja2 and Python3

To render the template, a dict of global variables must be passed. And when parsing the YAML file, the loader returns a dictionary! So everything is in place.

Combine everything together:

from yaml import safe_load
from jinja2 import Environment, FileSystemLoader

# fruits.yml parses to {'fruits': [...]}, which becomes the template's variables
with open("fruits.yml") as f:
    mydata = safe_load(f)

env = Environment(loader=FileSystemLoader("./"))
template = env.get_template("t.j2")
print(template.render(mydata))

and the result is:

$ python3 test.py
I like these tasty fruits:
* Apple
* Orange
* Strawberry
* Mango

A few years ago, I migrated from ISC BIND Authoritative Server to PowerDNS Authoritative Server.

Here was my configuration file:

# egrep -v '^$|#' /etc/pdns/pdns.conf
dname-processing=yes
launch=bind
bind-config=/etc/pdns/named.conf
local-address=MY_IPv4_ADDRESS
local-ipv6=MY_IPv6_ADDRESS
setgid=pdns
setuid=pdns

A quick reminder: a DNS server runs on TCP/UDP port 53.

I use dnsdist (a highly DNS-, DoS- and abuse-aware load balancer) in front of my pdns-auth, so my configuration file has a small change:

local-address=127.0.0.1
local-port=5353

instead of local-address and local-ipv6.

You can also use pdns without dnsdist.

My named.conf looks like this:

# cat /etc/pdns/named.conf
zone "balaskas.gr" IN {
    type master;
    file "/etc/pdns/var/balaskas.gr";
};

So in just a few minutes of work, bind was no more.

You can read more on the subject here: Migrating to PowerDNS.

Converting from Bind zone files to SQLite3

PowerDNS has many features and many backends. To use some of these features (like the HTTP JSON/REST API for automation), I suggest converting to the SQLite3 backend, especially for personal or SOHO use. The PowerDNS documentation is really simple and straightforward: SQLite3 backend.

Installation

Install the generic sqlite3 backend.

On a CentOS machine type:

# yum -y install pdns-backend-sqlite

Directory

Create the directory in which we will build and store the sqlite database file:

# mkdir -pv /var/lib/pdns

Schema

You can find the initial sqlite3 schema here:

/usr/share/doc/pdns/schema.sqlite3.sql

You can also review the SQLite3 database schema on GitHub.

If you can't find the schema.sqlite3.sql file, you can always download it from the web:

# curl -L -o /var/lib/pdns/schema.sqlite3.sql \
  https://raw.githubusercontent.com/PowerDNS/pdns/master/modules/gsqlite3backend/schema.sqlite3.sql

Create the database

Time to create the database file:

# cat /usr/share/doc/pdns/schema.sqlite3.sql | sqlite3 /var/lib/pdns/pdns.db

Migrating from files

Now the difficult part:

# zone2sql --named-conf=/etc/pdns/named.conf -gsqlite | sqlite3 /var/lib/pdns/pdns.db

100% done

7 domains were fully parsed, containing 89 records

Migrating from files - an alternative way

If you have already switched to the generic SQL backend on your PowerDNS auth setup, then you can use the pdnsutil load-zone command:

# pdnsutil load-zone balaskas.gr /etc/pdns/var/balaskas.gr
Mar 20 19:35:34 Reading random entropy from '/dev/urandom'
Creating 'balaskas.gr'

Permissions

If you don't want to read error messages like the one below:

sqlite needs to write extra files when writing to a db file

give your PowerDNS user permissions on the directory:

# chown -R pdns:pdns /var/lib/pdns

Configuration

Last thing: make the appropriate changes to the pdns.conf file:

## launch=bind

## bind-config=/etc/pdns/named.conf

launch=gsqlite3

gsqlite3-database=/var/lib/pdns/pdns.db
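Before restarting, you may want to check what zone2sql (or pdnsutil) actually wrote. Below is a minimal sketch using Python's standard sqlite3 module. It assumes the domains and records tables (with their id, name, type, content and domain_id columns) from the PowerDNS schema.sqlite3.sql file, and that record names are stored without a trailing dot. The function names are hypothetical.

```python
import sqlite3

def records_per_domain(conn):
    """Return {domain name: number of records}, similar to the zone2sql summary."""
    # Assumes the domains/records tables from PowerDNS schema.sqlite3.sql
    rows = conn.execute(
        "SELECT d.name, COUNT(r.id) FROM domains AS d "
        "LEFT JOIN records AS r ON r.domain_id = d.id "
        "GROUP BY d.id, d.name"
    )
    return dict(rows.fetchall())

def soa_content(conn, zone):
    """Return the SOA content stored for a zone, or None if there is none."""
    # Assumes names are stored without the trailing dot, e.g. 'balaskas.gr'
    row = conn.execute(
        "SELECT content FROM records WHERE name = ? AND type = 'SOA'",
        (zone,),
    ).fetchone()
    return row[0] if row else None
```

Against the real database, you would open `sqlite3.connect("/var/lib/pdns/pdns.db")` and pass that connection to either helper.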

Reload Service

Restarting powerdns daemon:

# service pdns restart

Restarting PowerDNS authoritative nameserver: stopping and waiting..done

Starting PowerDNS authoritative nameserver: started

Verify

# dig @127.0.0.1 -p 5353 -t soa balaskas.gr +short
ns14.balaskas.gr. evaggelos.balaskas.gr. 2018020107 14400 7200 1209600 86400

or

# dig @ns14.balaskas.gr. -t soa balaskas.gr +short
ns14.balaskas.gr. evaggelos.balaskas.gr. 2018020107 14400 7200 1209600 86400

perfect!
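The +short answer packs the seven SOA fields into one line. The small Python sketch below splits that line into named fields; the SOA class and the helper names are hypothetical. The serial 2018020107 appears to follow the common YYYYMMDDnn convention.

```python
from typing import NamedTuple

class SOA(NamedTuple):
    mname: str    # primary name server
    rname: str    # responsible mailbox, "@" written as the first dot
    serial: int
    refresh: int  # seconds
    retry: int    # seconds
    expire: int   # seconds
    minimum: int  # seconds, used as the negative-caching TTL

def parse_soa(text: str) -> SOA:
    """Split a 'dig +short' SOA answer into its seven fields."""
    mname, rname, *numbers = text.split()
    if len(numbers) != 5:
        raise ValueError(f"expected 7 SOA fields, got {2 + len(numbers)}")
    return SOA(mname, rname, *(int(n) for n in numbers))

def rname_to_email(rname: str) -> str:
    """Turn the RNAME into a mail address (escaped dots are not handled)."""
    local, _, domain = rname.rstrip(".").partition(".")
    return f"{local}@{domain}"

soa = parse_soa("ns14.balaskas.gr. evaggelos.balaskas.gr. "
                "2018020107 14400 7200 1209600 86400")
print(soa.serial, soa.refresh // 3600, rname_to_email(soa.rname))
```

This prints `2018020107 4 evaggelos@balaskas.gr`: the serial, a 4-hour refresh interval, and the contact address.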

Using the API

Having a database as the pdns backend means that we can use the PowerDNS API.

Enable the API

In the pdns core configuration file, /etc/pdns/pdns.conf, enable the API and don't forget to set a key:

api=yes

api-key=0123456789ABCDEF

The API key is used for authorization; clients send it in the X-API-Key HTTP header.

Reload the service.

Testing API

Using curl:

# curl -s -H 'X-API-Key: 0123456789ABCDEF' http://127.0.0.1:8081/api/v1/servers

The output is in JSON format, so it is preferable to use jq:

# curl -s -H 'X-API-Key: 0123456789ABCDEF' http://127.0.0.1:8081/api/v1/servers | jq .

[
  {
    "zones_url": "/api/v1/servers/localhost/zones{/zone}",
    "version": "4.1.1",
    "url": "/api/v1/servers/localhost",
    "type": "Server",
    "id": "localhost",
    "daemon_type": "authoritative",
    "config_url": "/api/v1/servers/localhost/config{/config_setting}"
  }
]
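The same calls can also be scripted. Below is a minimal sketch with Python's standard urllib and json modules. It assumes the listener URL and example key used in this article, and api_request, call, rrset_contents and contents_matching are hypothetical names. Only call() actually talks to the running API. The two filter helpers are plain-Python counterparts of the jq filters used in this section.

```python
import json
import urllib.request

API_URL = "http://127.0.0.1:8081/api/v1"  # listener used in this article
API_KEY = "0123456789ABCDEF"               # example key from pdns.conf

def api_request(path, method="GET", payload=None):
    """Build a PowerDNS API request that carries the X-API-Key header."""
    data = None if payload is None else json.dumps(payload).encode("utf-8")
    req = urllib.request.Request(API_URL + path, data=data, method=method)
    req.add_header("X-API-Key", API_KEY)
    if data is not None:
        req.add_header("Content-Type", "application/json")
    return req

def call(path, method="GET", payload=None):
    """Send the request and decode the JSON reply (an empty body gives None)."""
    with urllib.request.urlopen(api_request(path, method, payload)) as resp:
        body = resp.read()
    return json.loads(body) if body else None

def rrset_contents(zone, rtype):
    """Like jq '.rrsets[] | select(.type | contains(T)).records[].content'."""
    return [record["content"]
            for rrset in zone.get("rrsets", [])
            if rtype in rrset["type"]  # jq contains() on strings: substring test
            for record in rrset["records"]]

def contents_matching(zone, text):
    """Like jq '.rrsets[].records[] | select(.content | contains(X)).content'."""
    return [record["content"]
            for rrset in zone.get("rrsets", [])
            for record in rrset["records"]
            if text in record["content"]]
```

With the API running, `[s["version"] for s in call("/servers")]` corresponds to `jq '.[].version'`, and `rrset_contents(call("/servers/localhost/zones/balaskas.gr"), "SOA")` corresponds to the SOA filter.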

jq can also filter the output:

# curl -s -H 'X-API-Key: 0123456789ABCDEF' http://127.0.0.1:8081/api/v1/servers | jq '.[].version'
"4.1.1"

Zones

Get the entire zone from the database and view all of its Resource Record sets (RRsets):

# curl -s -H 'X-API-Key: 0123456789ABCDEF' http://127.0.0.1:8081/api/v1/servers/localhost/zones/balaskas.gr

or just getting the serial:

# curl -s -H 'X-API-Key: 0123456789ABCDEF' http://127.0.0.1:8081/api/v1/servers/localhost/zones/balaskas.gr | \
  jq .serial
2018020107

or getting the content of the SOA record:

# curl -s -H 'X-API-Key: 0123456789ABCDEF' http://127.0.0.1:8081/api/v1/servers/localhost/zones/balaskas.gr | \

jq '.rrsets[] | select( .type | contains("SOA")).records[].content '

"ns14.balaskas.gr. evaggelos.balaskas.gr. 2018020107 14400 7200 1209600 86400"

Records

Creating or updating records is also trivial.
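The PATCH body used here is a plain JSON changeset. Hand-escaping the double quotes that a TXT record's content must carry is easy to get wrong, so the sketch below builds the same REPLACE changeset in Python and lets json.dumps do the escaping. txt_replace_changeset is a hypothetical helper, and the output could be saved as /tmp/test.text or sent directly as the PATCH body.

```python
import json

def txt_replace_changeset(name, text, ttl=86400):
    """Build an RRset changeset that REPLACEs a TXT record set."""
    return {"rrsets": [{
        "name": name,            # fully qualified, with the trailing dot
        "type": "TXT",
        "ttl": ttl,
        "changetype": "REPLACE",
        # TXT content carries its own double quotes inside the JSON string
        "records": [{"content": f'"{text}"', "disabled": False}],
    }]}

body = json.dumps(
    txt_replace_changeset("test.balaskas.gr.", "Test, this is a test ! "),
    indent=2,
)
print(body)
```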

Create the Resource Record set in json format:

# cat > /tmp/test.text <<EOF
{
  "rrsets": [
    {
      "name": "test.balaskas.gr.",
      "type": "TXT",
      "ttl": 86400,
      "changetype": "REPLACE",
      "records": [
        {
          "content": "\"Test, this is a test ! \"",
          "disabled": false
        }
      ]
    }
  ]
}
EOF

and use the HTTP PATCH method to send it through the API:

# curl -s -X PATCH -H 'X-API-Key: 0123456789ABCDEF' --data @/tmp/test.text \
  http://127.0.0.1:8081/api/v1/servers/localhost/zones/balaskas.gr | jq .

Verify Record

We can query the internal server with dig:

# dig -t TXT test.balaskas.gr @127.0.0.1 -p 5353 +short
"Test, this is a test ! "

or query public DNS servers:

$ dig test.balaskas.gr txt +short @8.8.8.8

"Test, this is a test ! "

$ dig test.balaskas.gr txt +short @9.9.9.9

"Test, this is a test ! "

or via the API:

# curl -s -H 'X-API-Key: 0123456789ABCDEF' http://127.0.0.1:8081/api/v1/servers/localhost/zones/balaskas.gr | \
  jq '.rrsets[].records[] | select (.content | contains("test")).content'
"\"Test, this is a test ! \""

That’s it.

ACME v2 and Wildcard Certificate Support is Live

We have some good news: Let's Encrypt supports wildcard certificates! For more details, click here.

The key phrase in the post is this:

Certbot has ACME v2 support since Version 0.22.0.

Unfortunately, at the moment, using certbot on CentOS 6 is not so trivial, so here is an alternative approach using:

acme.sh

acme.sh is a pure Unix shell script implementing ACME client protocol.

# curl -LO https://github.com/Neilpang/acme.sh/archive/2.7.7.tar.gz
# tar xf 2.7.7.tar.gz
# cd acme.sh-2.7.7/
[acme.sh-2.7.7]# ./acme.sh --version
https://github.com/Neilpang/acme.sh
v2.7.7

PowerDNS

I have my own Authoritative Name Server based on PowerDNS software.

PowerDNS has an API for direct control and also a built-in web server for statistics.

To enable these features, make the appropriate changes to pdns.conf:

api=yes
api-key=0123456789ABCDEF
webserver-port=8081

and restart your pdns service.

To read more about these capabilities, click here: Built-in Webserver and HTTP API

Testing the API:

# curl -s -H 'X-API-Key: 0123456789ABCDEF' http://127.0.0.1:8081/api/v1/servers/localhost | jq .
{
  "zones_url": "/api/v1/servers/localhost/zones{/zone}",
  "version": "4.1.1",
  "url": "/api/v1/servers/localhost",
  "type": "Server",
  "id": "localhost",
  "daemon_type": "authoritative",
  "config_url": "/api/v1/servers/localhost/config{/config_setting}"
}

Environment

export PDNS_Url="http://127.0.0.1:8081"

export PDNS_ServerId="localhost"

export PDNS_Token="0123456789ABCDEF"

export PDNS_Ttl=60
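With these variables set, the dns_pdns hook publishes the DNS-01 challenge through the PowerDNS API. Per RFC 8555:

- The TXT record lives at _acme-challenge.<domain>.
- A wildcard is validated at its base domain, so -d balaskas.gr and -d *.balaskas.gr both use _acme-challenge.balaskas.gr.
- The record value is the unpadded base64url SHA-256 digest of the key authorization.

The Python sketch below illustrates those rules only; acme.sh computes all of this itself. The function names are hypothetical, and "TOKEN.THUMBPRINT" is a made-up placeholder for a real key authorization.

```python
import base64
import hashlib

def challenge_name(domain: str) -> str:
    """DNS name of the TXT record for a DNS-01 challenge."""
    if domain.startswith("*."):
        domain = domain[2:]  # wildcards are validated at the base domain
    return "_acme-challenge." + domain.rstrip(".")

def challenge_txt(key_authorization: str) -> str:
    """base64url(SHA-256(key authorization)) without '=' padding."""
    digest = hashlib.sha256(key_authorization.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")

# Placeholder: a real key authorization is "<token>.<account key thumbprint>"
print(challenge_name("*.balaskas.gr"), challenge_txt("TOKEN.THUMBPRINT"))
```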

Prepare Destination

I want to save the certificates under the /etc/letsencrypt directory.

By default, acme.sh saves certificate files under the /root/.acme.sh/balaskas.gr/ path.

I use SELinux and want to keep them under /etc, in a directory similar to the one used before, so:

# mkdir -pv /etc/letsencrypt/acme.sh/balaskas.gr/

Create WildCard Certificate

Run:

# ./acme.sh \
    --issue \
    --dns dns_pdns \
    --dnssleep 30 \
    -f \
    -d balaskas.gr \
    -d '*.balaskas.gr' \
    --cert-file /etc/letsencrypt/acme.sh/balaskas.gr/cert.pem \
    --key-file /etc/letsencrypt/acme.sh/balaskas.gr/privkey.pem \
    --ca-file /etc/letsencrypt/acme.sh/balaskas.gr/ca.pem \
    --fullchain-file /etc/letsencrypt/acme.sh/balaskas.gr/fullchain.pem

HSTS

Using HTTP Strict Transport Security means that browsers probably already know that you are using a single certificate for your domains. So, you need to add every domain to your wildcard certificate.
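One reason to request both balaskas.gr and *.balaskas.gr is that a wildcard name covers exactly one extra leftmost label. It matches www.balaskas.gr, but neither the bare balaskas.gr nor a.b.balaskas.gr. Below is a simplified Python sketch of that rule, in the spirit of RFC 6125. san_matches and covered are hypothetical names, and partial-label wildcards such as w*.example.com are deliberately not supported.

```python
def san_matches(pattern: str, hostname: str) -> bool:
    """Does one SAN DNS entry cover hostname? '*' only as the whole leftmost label."""
    pattern = pattern.lower().rstrip(".")
    hostname = hostname.lower().rstrip(".")
    if not pattern.startswith("*."):
        return pattern == hostname
    suffix = pattern[1:]                     # "*.balaskas.gr" -> ".balaskas.gr"
    if not hostname.endswith(suffix):
        return False
    label = hostname[: -len(suffix)]         # what the "*" has to stand for
    return label != "" and "." not in label  # exactly one label

def covered(sans, hostname):
    """True if any SAN entry of the certificate covers hostname."""
    return any(san_matches(p, hostname) for p in sans)

sans = ["*.balaskas.gr", "balaskas.gr"]  # SAN list of the certificate above
for host in ("balaskas.gr", "www.balaskas.gr", "a.b.balaskas.gr"):
    print(host, covered(sans, host))
```

With both names in the certificate, balaskas.gr and www.balaskas.gr are covered, while a.b.balaskas.gr is not.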

Web Server

Change your VirtualHost from something like this:

SSLCertificateFile /etc/letsencrypt/live/balaskas.gr/cert.pem
SSLCertificateKeyFile /etc/letsencrypt/live/balaskas.gr/privkey.pem
Include /etc/letsencrypt/options-ssl-apache.conf
SSLCertificateChainFile /etc/letsencrypt/live/balaskas.gr/chain.pem

to something like this:

SSLCertificateFile /etc/letsencrypt/acme.sh/balaskas.gr/cert.pem
SSLCertificateKeyFile /etc/letsencrypt/acme.sh/balaskas.gr/privkey.pem
Include /etc/letsencrypt/options-ssl-apache.conf
SSLCertificateChainFile /etc/letsencrypt/acme.sh/balaskas.gr/fullchain.pem

and restart your web server.

Browser

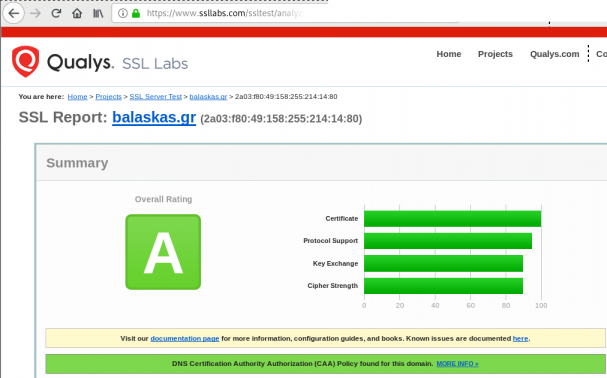

Qualys

Validation

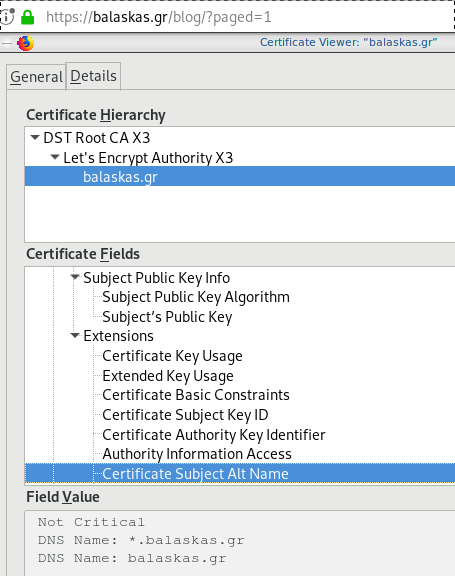

X509v3 Subject Alternative Name

# openssl x509 -text -in /etc/letsencrypt/acme.sh/balaskas.gr/cert.pem | egrep balaskas
Subject: CN=balaskas.gr
DNS:*.balaskas.gr, DNS:balaskas.gr

Continuous Deployment with GitLab: how to build and deploy a RPM Package with GitLab CI

I would like to automate building custom rpm packages with GitLab using its CI/CD functionality. This article documents my personal notes on the matter.

[updated: 2018-03-20 gitlab-runner Possible Problems]

Installation

You can find notes on how to install gitlab-community-edition here: Installation methods for GitLab. If you are like me, you don't run a shell script on your machines unless you are absolutely sure what it does. Assuming you have read script.rpm.sh and you are on a CentOS 7 machine, you can follow the notes below and install gitlab-ce manually:

Import gitlab PGP keys

# rpm --import https://packages.gitlab.com/gitlab/gitlab-ce/gpgkey
# rpm --import https://packages.gitlab.com/gitlab/gitlab-ce/gpgkey/gitlab-gitlab-ce-3D645A26AB9FBD22.pub.gpg

Gitlab repo

# curl -s 'https://packages.gitlab.com/install/repositories/gitlab/gitlab-ce/config_file.repo?os=centos&dist=7&source=script' \
  -o /etc/yum.repos.d/gitlab-ce.repo

Install Gitlab

# yum -y install gitlab-ce

Configuration File

The gitlab core configuration file is /etc/gitlab/gitlab.rb

Remember that every time you make a change, you need to reconfigure gitlab:

# gitlab-ctl reconfigure

My VM’s IP is 192.168.122.131. Update the external_url to use the same IP, or add a new entry to your hosts file (e.g. /etc/hosts).

external_url 'http://gitlab.example.com'

Run gitlab-ctl reconfigure for the updates to take effect.

Firewall

To access the GitLab dashboard from your lan, you have to configure your firewall appropriately.

You can do this in many ways:

- Accept everything on your http service:

  # firewall-cmd --permanent --add-service=http

- Accept your lan:

  # firewall-cmd --permanent --add-source=192.168.122.0/24

- Accept only tcp IPv4 traffic from a specific lan:

  # firewall-cmd --permanent --direct --add-rule ipv4 filter INPUT 0 -p tcp -s 192.168.0.0/16 -j ACCEPT

or you can completely stop firewalld (not recommended):

- Stop your firewall:

  # systemctl stop firewalld

Okay, I think you’ve got the idea.

Reload your firewalld after every change to its zones/sources/rules.

# firewall-cmd --reload
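The two source-based examples above use networks of different sizes. The quick check below uses Python's standard ipaddress module to show which of the two source networks a given client address falls into. The client addresses are made-up examples, and the sketch mirrors only the address matching, not firewalld's zone logic.

```python
import ipaddress

# Source networks taken from the firewall-cmd examples above
SOURCES = {
    "add-source 192.168.122.0/24": ipaddress.ip_network("192.168.122.0/24"),
    "direct rule -s 192.168.0.0/16": ipaddress.ip_network("192.168.0.0/16"),
}

def matching_sources(client_ip):
    """Labels of the source networks that contain client_ip."""
    addr = ipaddress.ip_address(client_ip)
    return [label for label, net in SOURCES.items() if addr in net]

for ip in ("192.168.122.1", "192.168.1.20", "10.0.0.5"):
    print(ip, matching_sources(ip))
```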

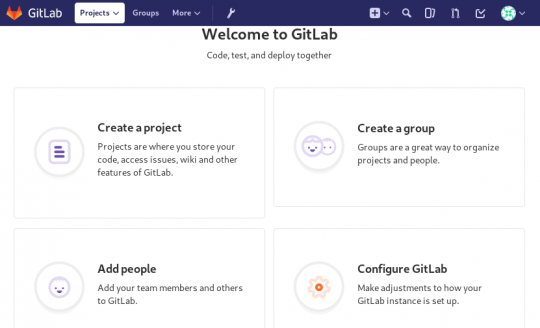

success

Browser

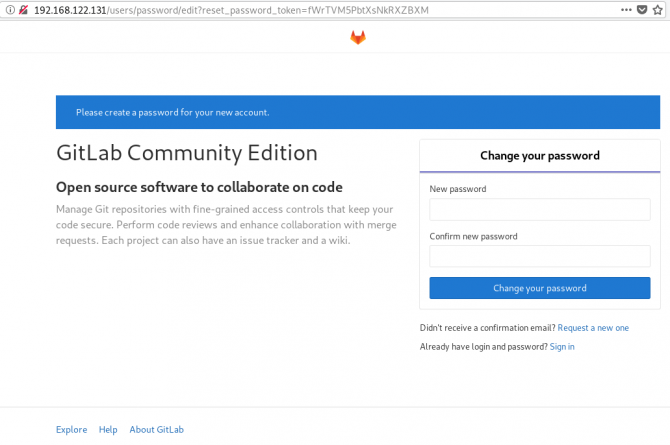

Point your browser to your gitlab installation:

http://192.168.122.131/

This is how it looks the first time:

and your first action is to Create a new password by typing a password and hitting the Change your password button.

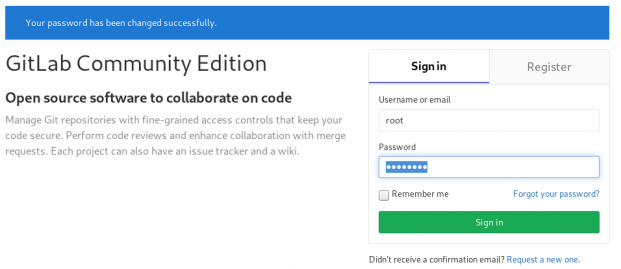

Login

First Page

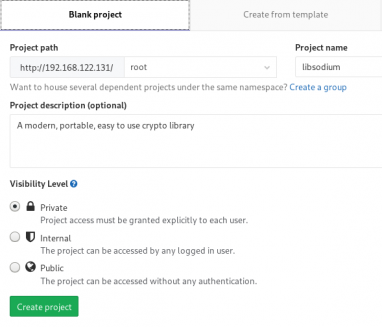

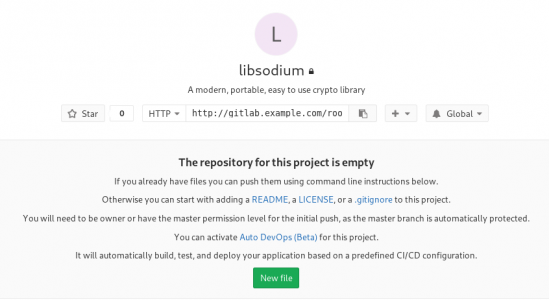

New Project

I want to start this journey with a simple-to-build project, so I will try to build libsodium, a modern, portable, easy-to-use crypto library.

New project --> Blank project

I will use this libsodium.spec file as the example for the CI/CD.

Docker

The idea is to build our custom rpm package of libsodium for CentOS 6, so we want to use Docker containers through GitLab CI/CD. We want clean and ephemeral images, so we will use containers as the build environments for GitLab CI/CD.

Installing docker is really simple.

Installation

# yum -y install docker

Run Docker

# systemctl restart docker
# systemctl enable docker

Download image

Download a fresh CentOS v6 image from Docker Hub:

# docker pull centos:6
Trying to pull repository docker.io/library/centos ...
6: Pulling from docker.io/library/centos
ca9499a209fd: Pull complete
Digest: sha256:551de58ca434f5da1c7fc770c32c6a2897de33eb7fde7508e9149758e07d3fe3

View Docker Images

# docker images
REPOSITORY          TAG   IMAGE ID       CREATED       SIZE
docker.io/centos    6     609c1f9b5406   7 weeks ago   194.5 MB

Gitlab Runner

Now, it is time to install and setup GitLab Runner.

In a nutshell, this program, written in Go, polls GitLab for the jobs triggered by changes to our repository (as defined in our yml file) and runs them. But let's start with the installation:

# curl -s 'https://packages.gitlab.com/install/repositories/runner/gitlab-runner/config_file.repo?os=centos&dist=7&source=script' \

-o /etc/yum.repos.d/gitlab-runner.repo

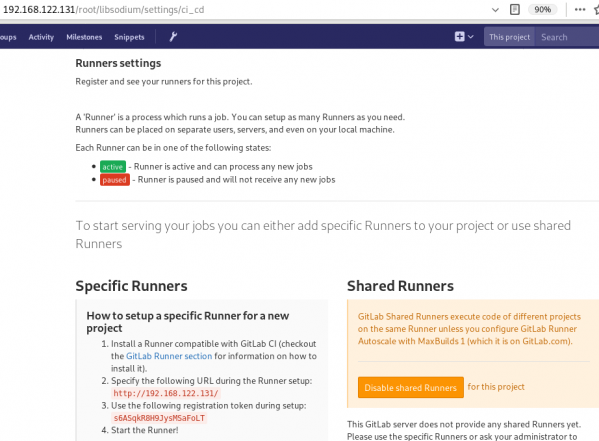

# yum -y install gitlab-runner

GitLab Runner Settings

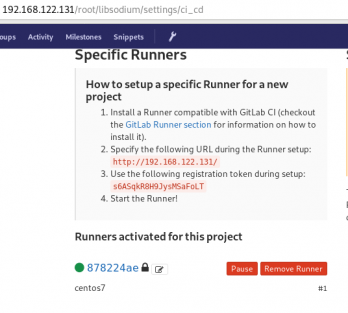

We need to connect our project with the gitlab-runner.

Project --> Settings --> CI/CD

or in our example:

http://192.168.122.131/root/libsodium/settings/ci_cd

Click the Expand button in the Runners settings and you should see something like this:

Register GitLab Runner

Type into your terminal:

# gitlab-runner register

and follow the instructions:

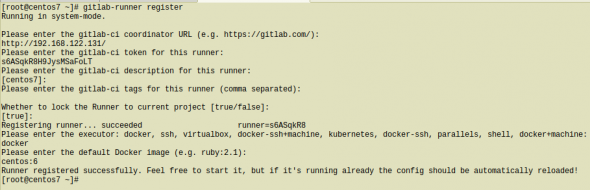

[root@centos7 ~]# gitlab-runner register
Running in system-mode.

Please enter the gitlab-ci coordinator URL (e.g. https://gitlab.com/):
http://192.168.122.131/
Please enter the gitlab-ci token for this runner:
s6ASqkR8H9JysMSaFoLT
Please enter the gitlab-ci description for this runner:
[centos7]:
Please enter the gitlab-ci tags for this runner (comma separated):
Whether to lock the Runner to current project [true/false]:
[true]:
Registering runner... succeeded runner=s6ASqkR8
Please enter the executor: docker, ssh, virtualbox, docker-ssh+machine, kubernetes, docker-ssh, parallels, shell, docker+machine:
docker
Please enter the default Docker image (e.g. ruby:2.1):
centos:6
Runner registered successfully. Feel free to start it, but if it's running already the config should be automatically reloaded!
[root@centos7 ~]#

By refreshing the previous page, we will see a new active runner on our project.

The Docker executor

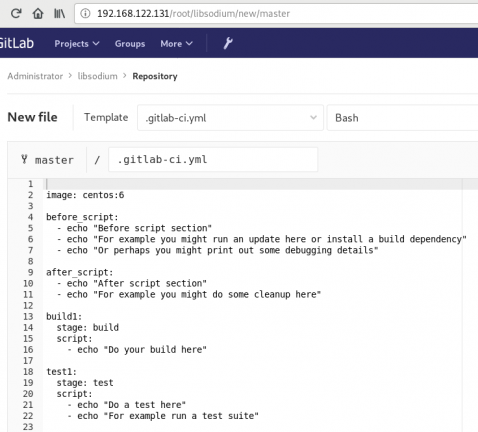

We are ready to setup our first executor to our project. That means we are ready to run our first CI/CD example!

In GitLab this is super easy, just add a

New file --> Template --> gitlab-ci.yml --> based on bash

Don't forget to change the image from busybox:latest to centos:6.

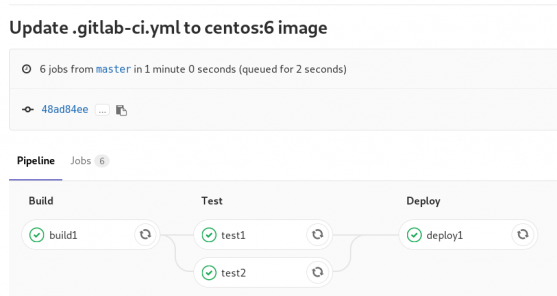

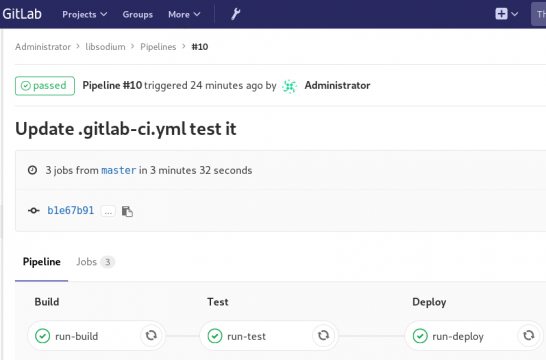

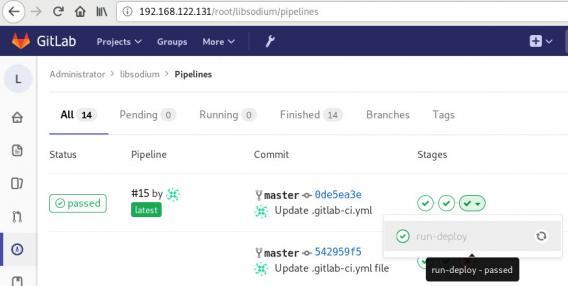

That will start a pipeline.

GitLab Continuous Integration

Below is a GitLab CI test file that builds the libsodium rpm:

.gitlab-ci.yml

image: centos:6

before_script:
  - echo "Get the libsodium version and name from the rpm spec file"
  - export LIBSODIUM_VERS=$(egrep '^Version:' libsodium.spec | awk '{print $NF}')
  - export LIBSODIUM_NAME=$(egrep '^Name:' libsodium.spec | awk '{print $NF}')

run-build:
  stage: build
  artifacts:
    untracked: true
  script:
    - echo "Install rpm-build package"
    - yum -y install rpm-build
    - echo "Install BuildRequires"
    - yum -y install gcc
    - echo "Create rpmbuild directories"
    - mkdir -p rpmbuild/{BUILD,RPMS,SOURCES,SPECS,SRPMS}
    - echo "Download source file from github"
    - curl -s -L https://github.com/jedisct1/$LIBSODIUM_NAME/releases/download/$LIBSODIUM_VERS/$LIBSODIUM_NAME-$LIBSODIUM_VERS.tar.gz -o rpmbuild/SOURCES/$LIBSODIUM_NAME-$LIBSODIUM_VERS.tar.gz
    - rpmbuild -D "_topdir `pwd`/rpmbuild" --clean -ba `pwd`/libsodium.spec

run-test:
  stage: test
  script:
    - echo "Test it, Just test it !"
    - yum -y install rpmbuild/RPMS/x86_64/$LIBSODIUM_NAME-$LIBSODIUM_VERS-*.rpm

run-deploy:
  stage: deploy
  script:
    - echo "Do your deploy here"

GitLab Artifacts

Before we continue, I need to talk about artifacts.

Artifacts are files and directories that jobs produce and that are not part of the git repository. We can pass those artifacts between stages, but remember that GitLab can only track files that exist under the cloned repository directory, not on the root filesystem of the Docker image.
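The before_script above derives LIBSODIUM_NAME and LIBSODIUM_VERS from the spec header with egrep and awk, and the run-build job turns them into the GitHub release URL. The Python sketch below does the same derivation, which can help check a spec file locally before pushing. The function names, the sample spec text and its version number are hypothetical. Unlike egrep, spec_field stops at the first matching line.

```python
def spec_field(spec_text: str, field: str) -> str:
    """Last word of the first 'Field:' line, like egrep '^Field:' | awk '{print $NF}'."""
    for line in spec_text.splitlines():
        if line.startswith(field + ":"):
            return line.split()[-1]
    raise KeyError(field)

def source_url(name: str, version: str) -> str:
    """Release tarball URL in the format used by the run-build job."""
    return (f"https://github.com/jedisct1/{name}/releases/download/"
            f"{version}/{name}-{version}.tar.gz")

# Hypothetical spec header, for illustration only
SAMPLE_SPEC = """\
Name:           libsodium
Version:        1.0.16
Release:        1%{?dist}
"""

name = spec_field(SAMPLE_SPEC, "Name")
version = spec_field(SAMPLE_SPEC, "Version")
print(source_url(name, version))
```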

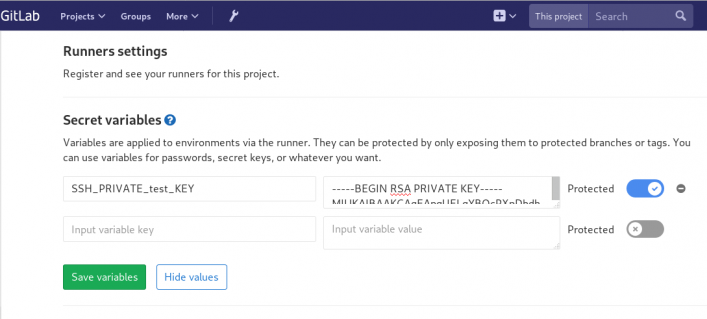

GitLab Continuous Delivery

We have successfully built an rpm file! Time to deploy it to another machine. To do that, we need to add the SSH private key to GitLab's secret variables:

Project --> Settings --> CI/CD

stage: deploy

Let's rewrite the GitLab deploy stage:

variables:
  DESTINATION_SERVER: '192.168.122.132'

run-deploy:
  stage: deploy
  script:
    - echo "Create ssh root directory"
    - mkdir -p ~/.ssh/ && chmod 700 ~/.ssh/
    - echo "Write secret variable to the ssh private key file"
    - echo -e "$SSH_PRIVATE_test_KEY" > ~/.ssh/id_rsa
    - chmod 0600 ~/.ssh/id_rsa
    - echo "Install SSH client"
    - yum -y install openssh-clients
    - echo "Secure Copy the libsodium rpm file to the destination server"
    - scp -o StrictHostKeyChecking=no rpmbuild/RPMS/x86_64/$LIBSODIUM_NAME-$LIBSODIUM_VERS-*.rpm $DESTINATION_SERVER:/tmp/
    - echo "Install libsodium rpm file to the destination server"
    - ssh -o StrictHostKeyChecking=no $DESTINATION_SERVER yum -y install /tmp/$LIBSODIUM_NAME-$LIBSODIUM_VERS-*.rpm

and we can see that our pipeline has passed!

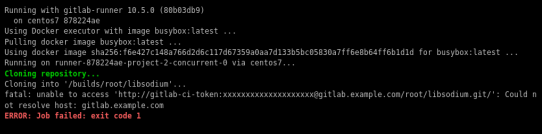

Possible Problems:

That will probably fail, because our Docker images don’t recognize gitlab.example.com.

Disclaimer: if you are using a real FQDN and IP, you will probably not face this problem. I mention this issue only for people who follow this article step by step.

Easy fix:

# export -p EXTERNAL_URL="http://192.168.122.131" && yum -y reinstall gitlab-ce

GitLab Runner

GitLab Runner is not running !

# gitlab-runner verify
Running in system-mode.
Verifying runner... is alive runner=e9bbcf90
Verifying runner... is alive runner=77701bad

# gitlab-runner status
gitlab-runner: Service is not running.

# gitlab-runner install -u gitlab-runner -d /home/gitlab-runner/

# systemctl is-active gitlab-runner
inactive

# systemctl enable gitlab-runner
# systemctl start gitlab-runner

# systemctl is-active gitlab-runner
active

# systemctl | egrep gitlab-runner
gitlab-runner.service loaded active running GitLab Runner

# gitlab-runner status
gitlab-runner: Service is running!

# ps -e fuwww | egrep -i gitlab-[r]unner
root 5116 0.4 0.1 63428 16968 ? Ssl 07:44 0:00 /usr/bin/gitlab-runner run --working-directory /home/gitlab-runner/ --config /etc/gitlab-runner/config.toml --service gitlab-runner --syslog --user gitlab-runner

Encrypted files in Dropbox

As we live in the age of smartphones and mobile access to the cloud, there is a growing need to access our files from anywhere. We need our files to be available on any computer, ours (private) or others' (public). Traveling with your entire tech equipment is not always a good idea, and in the era of the cloud you don't need to bring everything with you.

There are a lot of cloud file hosting providers out there. Wikipedia has a good Comparison of file hosting services article you can read.

I’ve started to use Dropbox for that reason. I use Dropbox as a public digital bucket to store and share public files. Every digital asset that is online is, in some sense, public; only when you use end-to-end encryption can you say that something is more secure than before.

I also want to store some encrypted files in my cloud account without needing to trust Dropbox (or any cloud file hosting provider, for that matter). As an extra security layer on top of Dropbox, I use encfs, and this blog post is a mini tutorial for a proof of concept.

EncFS - Encrypted Virtual Filesystem

(definition from encfs github account)

EncFS creates a virtual encrypted filesystem which stores encrypted data in the rootdir directory and makes the unencrypted data visible at the mountPoint directory. The user must supply a password which is used to (indirectly) encrypt both filenames and file contents.

That means that you can store your encrypted files somewhere and mount the decrypted files on a folder on your computer.

Disclaimer: I don't know how secure encfs is. It is an extra layer that doesn't need any root access for end users (except for the installation part), and it is really simple to use. There is a useful answer on Stack Exchange that you might like to read.

For more information on EncFS, you can also visit the EncFS - Wikipedia page.

Install EncFS

- archlinux

  $ sudo pacman -S --noconfirm encfs

- fedora

  $ sudo dnf -y install fuse-encfs

- ubuntu

  $ sudo apt-get install -y encfs

How does EncFS work?

- You have two (2) directories: the source and the mount point.
- You encrypt and store the files in the source directory with a password.
- You can view/edit your files in cleartext in the mount point.
- Create a folder inside Dropbox,
  e.g. /home/ebal/Dropbox/Boostnote
- Create a folder outside of Dropbox,
  e.g. /home/ebal/Boostnote

  Both folders are completely empty.
- Choose a long password.

  Just for testing, I am using a SHA256 message digest of an image that I found on the internet!

  e.g. sha256sum /home/ebal/secret.png

  That means I don't know the password, but I can re-create it whenever I hash the image.

BE CAREFUL: this suggestion is an example, only for testing. The proper way is to use a randomly generated long password from your password manager, e.g. KeePassX.
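The test-only passphrase above is simply the hex SHA-256 digest that sha256sum prints. The recommended alternative is a long random secret. The Python sketch below shows both. The function names are hypothetical, and secrets.token_urlsafe is only a stand-in for a password manager's generator.

```python
import hashlib
import secrets

def file_sha256_hex(path, chunk_size=65536):
    """Same hex string as: sha256sum FILE | awk '{print $1}' (test use only)."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def random_passphrase(nbytes=32):
    """Random URL-safe passphrase from nbytes of entropy (stand-in for a password manager)."""
    return secrets.token_urlsafe(nbytes)
```

For example, `file_sha256_hex("/home/ebal/secret.png")` reproduces the value that the encfs command below reads from standard input.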

How does Dropbox work?

The dropbox-client monitors your /home/ebal/Dropbox/ directory for any changes so that it can sync your files to your account.

You don't need Dropbox running to use encfs.

Running the dropbox-client is the easiest way, but you can always use a sync client, e.g. rclone, to sync your encrypted files to Dropbox (or any cloud storage).

I guess it depends on your threat model. For this proof-of-concept article, I run the dropbox-client daemon in the background.

Create and Mount

Now is the time to mount the source directory inside dropbox with our mount point:

$ sha256sum /home/ebal/secret.png |
    awk '{print $1}' |
    encfs -S -s -f /home/ebal/Dropbox/Boostnote/ /home/ebal/Boostnote/

Reminder: EncFS works with absolute paths!

Check Mount Point

$ mount | egrep -i encfsencfs on /home/ebal/Boostnote type fuse.encfs

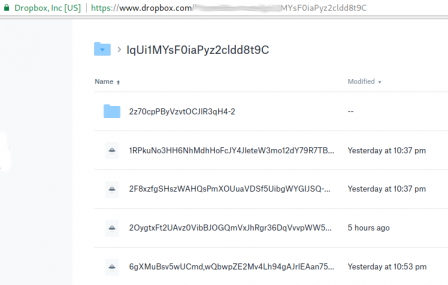

(rw,nosuid,nodev,relatime,user_id=1001,group_id=1001,default_permissions)View Files on Dropbox

Files inside dropbox:

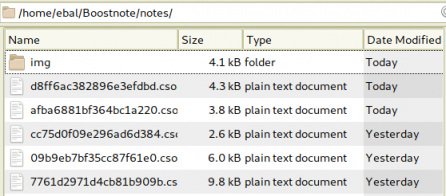

View Files on the Mount Point

Unmount EncFS Mount Point

When you mount the source directory, encfs has an option to auto-unmount the mount point when idle.

Or you can use the below command on demand:

$ fusermount -u /home/ebal/Boostnote

On another PC

The simplicity of this approach shows when you want to access these files on another PC.

dropbox-client has already synced your encrypted files.

So the only thing you have to do is type the exact same command on the new machine, as in the Create and Mount section:

$ sha256sum /home/ebal/secret.png |
    awk '{print $1}' |
    encfs -S -s -f /home/ebal/Dropbox/Boostnote/ /home/ebal/Boostnote/

Android

How about Android ?

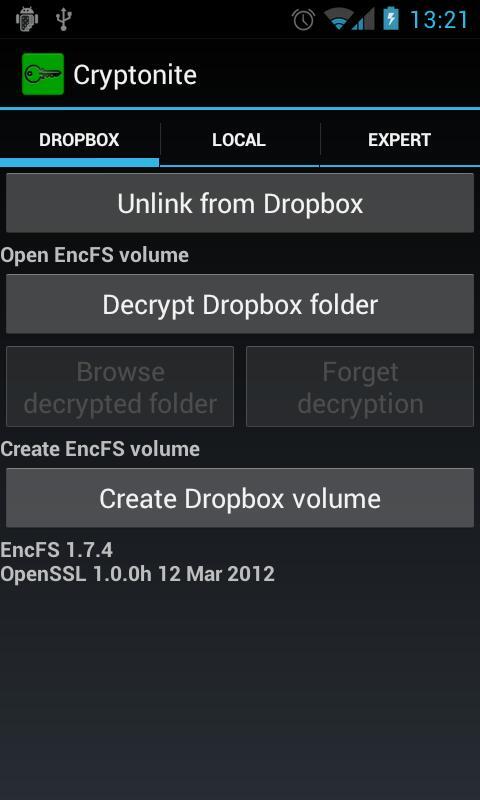

You can use Cryptonite.

Cryptonite can use EncFS and TrueCrypt on Android and you can find the app on Google Play