In the last few months of this year, one business question has been on all our minds:

- Can we reduce cost?

- Are there any legacy cloud resources that we can remove?

The answer is YES, it is always Yes. It is part of your Technical Debt (remember that?).

In our case we had to check a few cloud resources, but the most impressive was our Object Storage Service: in the past we were using Buckets and Objects as backup volumes … for databases … database clusters!!

So let’s find out how much Technical Debt we have in our OBS … ~ 1.8PB. One petabyte (PB) holds 1000 terabytes (TB), and one terabyte holds 1000 gigabytes (GB).
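To put that number in perspective, here is a quick sketch of the conversion using the decimal units from the paragraph above. The function names are made up for illustration, and 1.8 is the approximate figure quoted in the text.

```python
# Decimal (SI) units, as used in the text: 1 PB = 1000 TB, 1 TB = 1000 GB.
TB_PER_PB = 1000
GB_PER_TB = 1000


def pb_to_tb(pb: float) -> float:
    """Convert petabytes to terabytes."""
    return pb * TB_PER_PB


def pb_to_gb(pb: float) -> float:
    """Convert petabytes to gigabytes."""
    return pb * TB_PER_PB * GB_PER_TB


debt_pb = 1.8  # approximate size of the legacy data, from the text
print(f"{debt_pb} PB = {pb_to_tb(debt_pb):,.0f} TB = {pb_to_gb(debt_pb):,.0f} GB")
```

So roughly 1,800 TB, or 1,800,000 GB, of old backups.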

We confirmed with our colleagues and scheduled the decommissioning of these legacy buckets/objects. We noticed that a few of them are terabytes in size, with millions of objects and, in some cases, no hierarchical structure (paths), which is a problem for command line tools and web UI tools.

The problem is called LISTING and/or PAGING.

That means we cannot list all our objects in a POSIX way (nerdsssss) and then try to delete them. We need to PAGE through them, 50 or 100 objects at a time, and that means we need to batch our scripts. This could be a very long-running job.

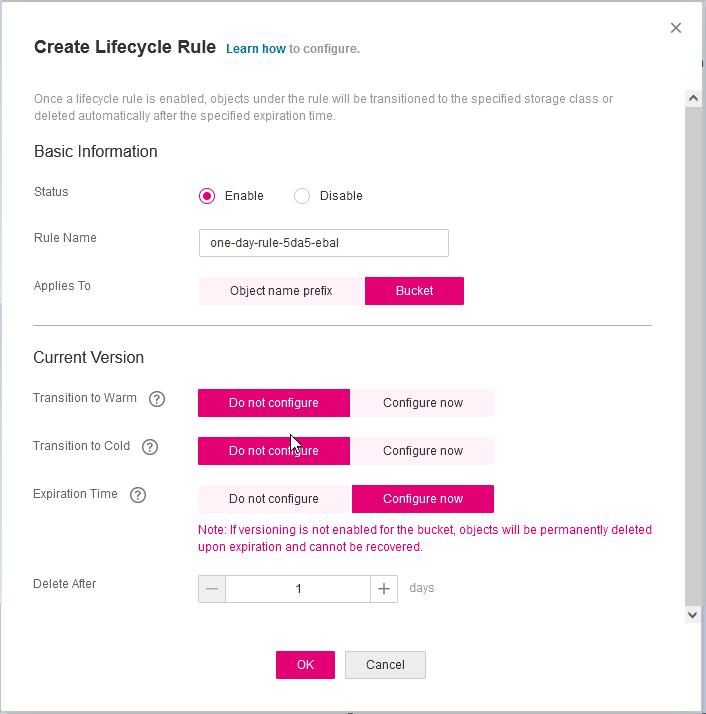
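To show what "batching our scripts" looks like, here is a minimal sketch of a list-a-page, delete-a-page loop. It is not the script we ran: `FakeBucket`, `list_page` and `delete_batch` are hypothetical stand-ins for whatever your storage client offers, and the bucket here is just an in-memory list so the example runs on its own.

```python
from typing import Callable, List, Optional, Tuple

# A page is (keys, next_marker); next_marker is None on the last page.
Page = Tuple[List[str], Optional[str]]


def delete_in_pages(
    list_page: Callable[[Optional[str], int], Page],
    delete_batch: Callable[[List[str]], None],
    page_size: int = 100,
) -> int:
    """List one page at a time and delete it before asking for the next one."""
    deleted = 0
    marker: Optional[str] = None
    while True:
        keys, marker = list_page(marker, page_size)
        if keys:
            delete_batch(keys)
            deleted += len(keys)
        if marker is None:
            return deleted


class FakeBucket:
    """Hypothetical in-memory bucket, only here to make the demo runnable."""

    def __init__(self, keys):
        self.keys = sorted(keys)
        self.list_calls = 0

    def list_page(self, marker, limit):
        self.list_calls += 1
        # Return keys that sort after the marker, like a paged listing would.
        remaining = [k for k in self.keys if marker is None or k > marker]
        page = remaining[:limit]
        next_marker = page[-1] if len(remaining) > limit else None
        return page, next_marker

    def delete_batch(self, keys):
        doomed = set(keys)
        self.keys = [k for k in self.keys if k not in doomed]


bucket = FakeBucket(f"backup-{i:05d}" for i in range(1050))
total = delete_in_pages(bucket.list_page, bucket.delete_batch, page_size=100)
print(total, "objects deleted in", bucket.list_calls, "list calls")
```

Every page costs at least one listing round trip plus one delete round trip. At 100 objects per page, a bucket with 10 million objects needs 100,000 listing calls, which is why this turns into such a long job.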

Then, after a couple of days of reading manuals (remember those?), I found that we can create a Lifecycle Policy on our Buckets!!!

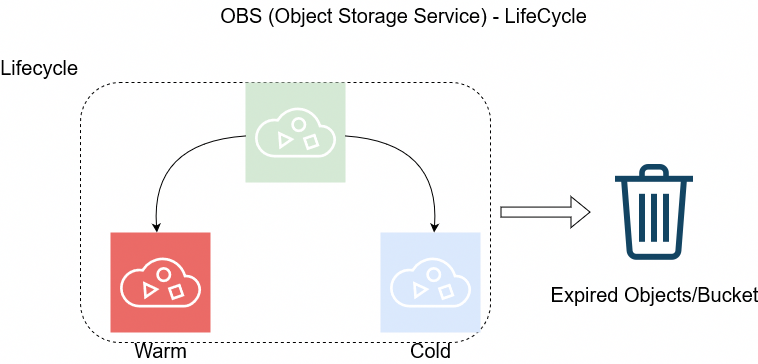

But before going on to set up the Lifecycle policy, a quick reminder of how the Object Lifecycle works.

The objects can be in a Warm/Cold state or in an Expired state, as buckets support versioning. This has to do with the retention policy of logs/data.

So in order to automatically delete ALL objects from a bucket, we need to set the Expire Time to 1 day.

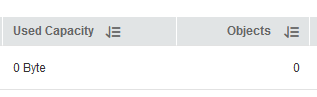
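We set this up in the web UI, but the same rule can be written down as data. The sketch below assumes your object storage accepts an S3-style lifecycle configuration, which you should verify against your provider's documentation. The rule ID is made up, and the noncurrent-version part is my assumption about what a versioned bucket needs, not something taken from the screenshot.

```python
import json


def expire_everything_rule(days: int = 1) -> dict:
    """Build an S3-style lifecycle configuration that expires every object."""
    return {
        "Rules": [
            {
                "ID": "expire-all-objects",  # hypothetical rule name
                "Status": "Enabled",
                "Filter": {"Prefix": ""},  # empty prefix matches the whole bucket
                "Expiration": {"Days": days},
                # Assumption: on a versioned bucket, older versions
                # need their own expiry or they stay behind.
                "NoncurrentVersionExpiration": {"NoncurrentDays": days},
            }
        ]
    }


config = expire_everything_rule(days=1)
print(json.dumps(config, indent=2))

# With an S3-compatible client such as boto3, a dict of this shape could be
# passed as LifecycleConfiguration to put_bucket_lifecycle_configuration.
# Whether your service accepts it as-is is an assumption to check first.
```

The nice part of this approach is that the storage service does the deleting on its side, so there is no listing or paging from our end at all.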

Then you have to wait 24 hours, and the next day:

yehhhhhhhhhhhh !

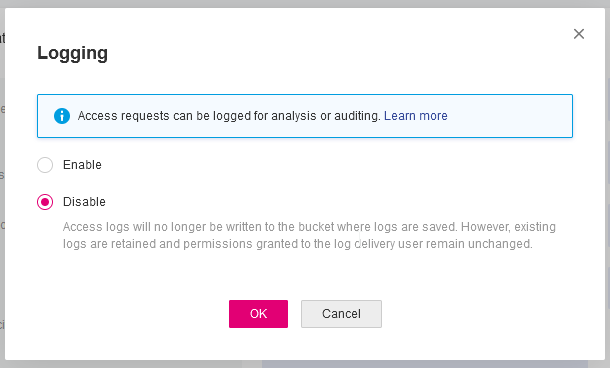

PS. Caveat: remember, BEFORE all that, to disable Logging, as the default setting is to log every action to a local log path inside the Bucket.